Introduction to SEO Evolution

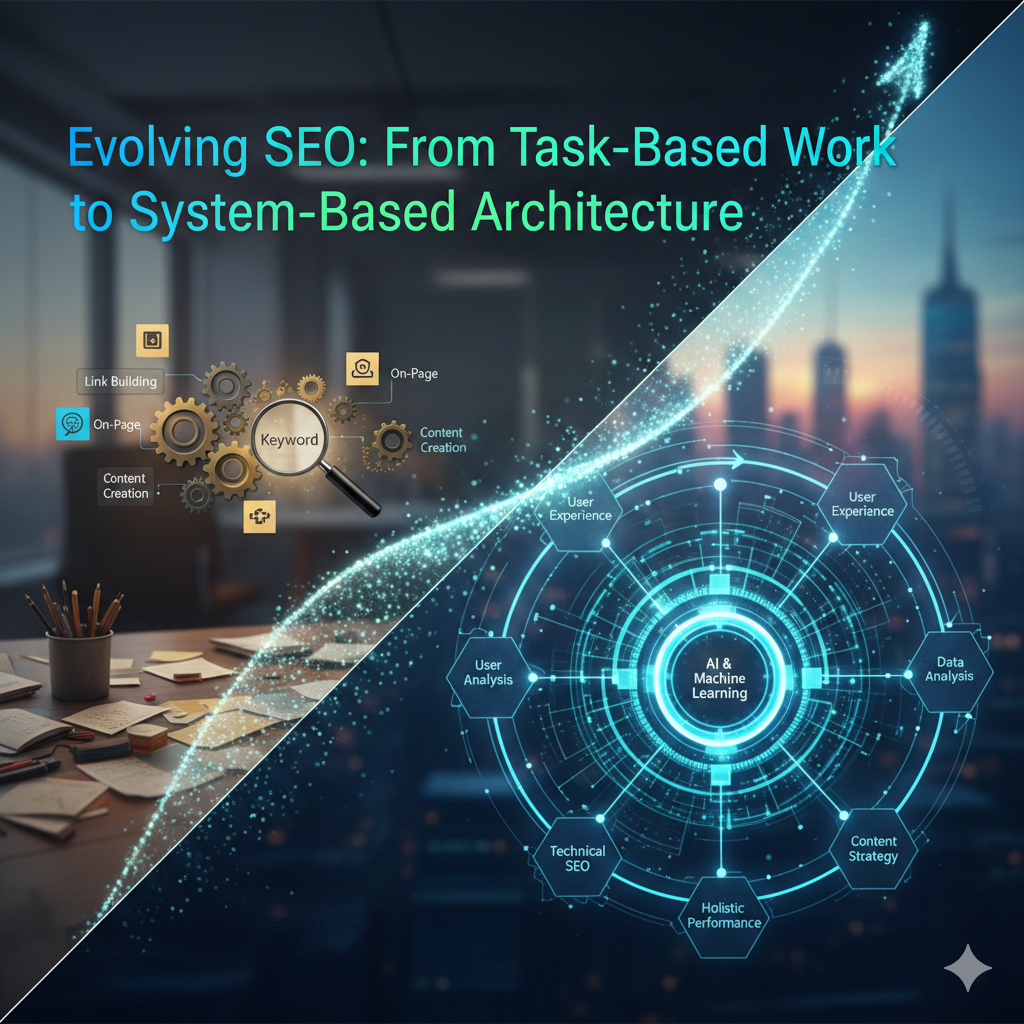

The shift from Task-Based SEO to System-Based Architecture represents the professionalization of the industry. In 2026, isolated optimizations are “table stakes”; true growth comes from owning a self-sustaining ecosystem.

1. Task-Based SEO (The Foundation)

Historically, SEO was a checklist of independent “plumbing” tasks.

- Linear Execution: Keyword research $\rightarrow$ Content creation $\rightarrow$ Link building.

- Siloed Metrics: Success was measured by individual keyword rankings or backlink counts.

- The Risk: Without a system, these tasks become “dead weight” when algorithms shift toward Answer Engine Optimization (AEO).

2. System-Based Architecture (The Future)

Modern SEO treats a website as a single, interconnected entity. This framework prioritizes the relationship between technical performance, content clusters, and user signals.

- Integrated Pillars: SXO (Search Experience), GEO (Generative Engine Optimization), and Technical AI-Readiness function as a unified engine.

- Entity-First Logic: Instead of targeting strings (keywords), systems build Topical Maps to establish the site as a recognized entity in the Knowledge Graph.

- Adaptive Growth: The system uses real-time data to self-correct, ensuring visibility across traditional search and AI agents like Gemini or Perplexity.

The Performance Governor’s Comparison

| Feature | Task-Based (Isolated) | System-Based (Integrated) |
| --- | --- | --- |
| Focus | Rankings for specific pages | Visibility across the “Search Everywhere” ecosystem |
| Technology | Manual spreadsheets & basic tools | n8n automation, JS-based audits, & AI orchestration |
| Trust Signal | Link volume | E-E-A-T verified by machine-readable structure |
| ROI | Short-term traffic spikes | Compounding growth & lower blended CAC |

Why This Matters for 2026

Search engines are now Answer Engines. If your SEO isn’t a “system,” AI agents cannot easily parse, chunk, and cite your content. To win in this landscape, your strategy must move from producing pages to governing a trusted authority engine.

Understanding Task-Based vs. System-Based SEO

For a Technical Growth Architect, the transition from “Tasks” to “Systems” is the difference between manual labor and scalable automation.

1. Task-Based SEO (The Linear Approach)

Focuses on discrete, singular actions like keyword placement, individual backlink outreach, or meta-tag updates.

- Weakness: Operates in silos. Changes are manual, making it impossible to scale across enterprise-level sites without increasing headcount.

- Risk: Highly reactive; a single algorithm update can dismantle isolated optimizations.

2. System-Based SEO (The Scalable Architecture)

Treats SEO as an integrated engine where technical health, content clusters, and user signals function as a unified ecosystem.

- Strength: Leverages n8n automation and JavaScript to handle repetitive tasks, allowing the system to self-correct and adapt.

- The “Academic Bridge”: By automating the monitoring of Consumer Trust Signals, the system ensures that E-E-A-T remains a constant factor rather than a one-time audit.

The Performance Governor’s Verdict

| Feature | Task-Based SEO | System-Based SEO |
| --- | --- | --- |
| Primary Goal | Check off a “To-Do” list | Build a scalable growth engine |
| Methodology | Manual & Linear | Automated & Integrated |
| Scalability | Low (Limited by human hours) | High (Driven by technical stack) |
| ROI Impact | Short-term rankings | Long-term business sustainability |

The Role of Local Development Environments

For a Technical Growth Architect, the Local Development Environment is the “engine room.” It allows you to build, break, and automate SEO systems on Windows 11 without risking live production environments or hitting rate limits on browser-based tools.

Why Local-First Matters for Scalability

- Risk-Free Iteration: Test complex JavaScript injections or n8n automation workflows locally. This ensures that a bug in your “system-based architecture” doesn’t tank a client’s live rankings.

- Automated Data Flows: Use your local machine’s resources to run heavy Python or Node.js scripts for Topical Mapping and Technical Audits. This bypasses the performance bottlenecks of cloud-based SEO tools.

- Performance Benchmarking: Test Core Web Vitals and site speed in a sandbox. Identifying regressions locally prevents damaging the Consumer Trust Signals (like reliability and speed) you identified in your research.
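
As a rough illustration of the benchmarking step, the sketch below compares two local test runs and reports any metric whose rating got worse. The thresholds are Google's published Core Web Vitals boundaries; the function names and the sample measurements are hypothetical, and how you collect the numbers (Lighthouse, a browser script, or something else) is left open.

```python
# Thresholds follow Google's published Core Web Vitals guidance:
# (upper bound for "good", upper bound for "needs-improvement").
THRESHOLDS = {
    "lcp_ms": (2500, 4000),  # Largest Contentful Paint, milliseconds
    "inp_ms": (200, 500),    # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),      # Cumulative Layout Shift, unitless
}

RATING_ORDER = ["good", "needs-improvement", "poor"]


def rate_metric(name: str, value: float) -> str:
    """Return 'good', 'needs-improvement', or 'poor' for one metric."""
    good, needs_improvement = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs-improvement"
    return "poor"


def find_regressions(baseline: dict, candidate: dict) -> list:
    """List (metric, before, after) for metrics whose rating got worse."""
    regressions = []
    for name in THRESHOLDS:
        before = rate_metric(name, baseline[name])
        after = rate_metric(name, candidate[name])
        if RATING_ORDER.index(after) > RATING_ORDER.index(before):
            regressions.append((name, before, after))
    return regressions


if __name__ == "__main__":
    # Hypothetical measurements from two local runs of the same page.
    baseline = {"lcp_ms": 2100, "inp_ms": 180, "cls": 0.05}
    candidate = {"lcp_ms": 3200, "inp_ms": 190, "cls": 0.05}
    for name, before, after in find_regressions(baseline, candidate):
        print(f"{name}: {before} -> {after}")
```

Comparing ratings rather than raw numbers keeps the check quiet on small run-to-run noise. A stricter setup could also flag a percentage change within the same rating band.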

The Senior Director’s Perspective (ROI)

- Zero Downtime: Deploying “pre-vetted” code reduces the risk of site crashes, protecting revenue.

- Speed to Market: Local automation allows you to audit thousands of pages in minutes, shortening project timelines compared with manual browser-based methods.
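
To make the local audit idea concrete, here is a minimal sketch that checks one HTML document for a handful of on-page basics using only the Python standard library. The class name `OnPageAudit`, the function `audit_html`, and the specific checks are illustrative assumptions, not a complete audit; a real pipeline would loop this over saved crawl output.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Collect a few on-page elements from one HTML document."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.canonical = None
        self.h1_count = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Only text inside <title> is accumulated.
        if self._in_title:
            self.title += data


def audit_html(html: str) -> list:
    """Return a list of human-readable issues found in the document."""
    parser = OnPageAudit()
    parser.feed(html)
    issues = []
    if not parser.title.strip():
        issues.append("missing <title>")
    if not parser.meta_description:
        issues.append("missing meta description")
    if parser.canonical is None:
        issues.append("missing canonical link")
    if parser.h1_count != 1:
        issues.append(f"expected one <h1>, found {parser.h1_count}")
    return issues
```

Because the function takes a string and returns a list, it can be run against thousands of locally saved pages without touching the live site.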

Automating Topical Mapping

In an enterprise-level system, manual content planning is a bottleneck. Automated Topical Mapping uses JS-based scraping or n8n workflows to programmatically cluster related themes based on semantic relevance and user intent.

The Architect’s Blueprint: Topical Clusters

By moving from “keywords” to “entities,” you build a resilient site architecture that search engines recognize as an authority.

- Semantic Intelligence: Use machine learning to group content into “Pillar” and “Spoke” models. This signals deep expertise to algorithms, moving the needle on E-E-A-T.

- Reduced Bounce, Increased Trust: A logical internal linking structure (automated via script) allows users to navigate effortlessly. This reinforces the Consumer Trust Signals from your research by providing a cohesive, reliable journey.

- Dynamic Adaptation: Automated maps update as search trends shift. Instead of a static spreadsheet, your Windows 11 SEO Engine can re-cluster topics based on real-time GSC data.
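
The pillar-and-spoke grouping described above can be sketched in a few lines. Note the assumptions: this uses plain token overlap (Jaccard similarity) as a stand-in for a real semantic model, the stopword list and the 0.3 threshold are arbitrary, and the input is a hypothetical dictionary of queries and click counts such as you might export from GSC.

```python
STOPWORDS = {"a", "an", "the", "for", "to", "of", "in", "and", "how", "what", "is"}


def tokens(query: str) -> frozenset:
    """Lowercase, split on whitespace, and drop stopwords."""
    return frozenset(w for w in query.lower().split() if w not in STOPWORDS)


def jaccard(a: frozenset, b: frozenset) -> float:
    """Share of tokens the two sets have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_queries(clicks_by_query: dict, threshold: float = 0.3) -> dict:
    """Greedy clustering: queries are visited from most to fewest clicks.

    A query joins the most similar existing pillar if the similarity
    reaches the threshold; otherwise it becomes a new pillar.
    Returns {pillar_query: [spoke_query, ...]}.
    """
    ranked = sorted(clicks_by_query, key=lambda q: (-clicks_by_query[q], q))
    clusters = {}
    for query in ranked:
        best_pillar, best_score = None, 0.0
        for pillar in clusters:
            score = jaccard(tokens(query), tokens(pillar))
            if score > best_score:
                best_pillar, best_score = pillar, score
        if best_pillar is not None and best_score >= threshold:
            clusters[best_pillar].append(query)
        else:
            clusters[query] = []
    return clusters


if __name__ == "__main__":
    # Hypothetical query data.
    sample = {
        "technical seo audit": 900,
        "technical seo audit checklist": 300,
        "seo audit tools": 200,
        "schema markup generator": 500,
        "schema markup for reviews": 150,
    }
    for pillar, spokes in cluster_queries(sample).items():
        print(pillar, "->", spokes)
```

Re-running the function on fresh data is what makes the map "dynamic": the clusters are recomputed rather than maintained by hand. Swapping `jaccard` for an embedding-based similarity would move this closer to the semantic grouping the section has in mind.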

Performance Governance: The ROI of Structure

- Efficiency: Automating the mapping process saves hundreds of manual hours, allowing for rapid scaling of B2B or SaaS content hubs.

- Ranking Power: Search engines reward “topical authority.” A structured map ensures Google understands your site’s core expertise, leading to higher rankings for competitive terms.

Real-Time Performance Reporting

In a system-based architecture, waiting for monthly reports is a strategic failure. Real-time performance reporting transforms SEO from a reactive task into a proactive growth engine.

Breaking the Manual Bottleneck

- Live Feedback Loops: Move away from historical data lags. Real-time dashboards allow for immediate pivots when algorithms shift or technical errors occur.

- Centralized Truth: A single source of live metrics eliminates silos, ensuring technical, content, and executive teams align on the same KPIs and ROI targets.

- Automation-First Insights: Using n8n or Python to aggregate search console and analytics data allows for instant trend forecasting and anomaly detection.
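
One simple way to approach the anomaly detection mentioned above is a trailing-window z-score on daily clicks. This is a minimal sketch under stated assumptions: the 7-day window and the threshold of 3 are arbitrary defaults, the function name is hypothetical, and the input is a plain list of daily click counts that you have already pulled from your search console export.

```python
from statistics import mean, stdev


def detect_anomalies(daily_clicks: list, window: int = 7, z_threshold: float = 3.0) -> list:
    """Return indices of days that deviate sharply from the trailing window."""
    anomalies = []
    for i in range(window, len(daily_clicks)):
        history = daily_clicks[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: any change at all counts as a deviation.
            if daily_clicks[i] != mu:
                anomalies.append(i)
            continue
        z = (daily_clicks[i] - mu) / sigma
        if abs(z) >= z_threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    # Hypothetical daily clicks with a sharp drop on day index 8.
    clicks = [100, 104, 98, 101, 99, 103, 97, 102, 40, 100]
    print(detect_anomalies(clicks))
```

A z-score ignores weekly seasonality, so sites with strong weekday and weekend patterns may want to compare each day against the same weekday in earlier weeks instead.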

The Architect’s Perspective: Scalability & Trust

- Scalability: Automated reporting removes the “human bottleneck,” allowing you to monitor thousands of pages without increasing headcount.

- Consumer Trust: Rapidly identifying and fixing 404s or UX regressions protects the trust signals essential to your MS Research findings; a broken site is a broken promise to the user.
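
For the 404 case specifically, a crawl already gives you what is needed to find the pages that point at broken URLs. The sketch below assumes two hypothetical inputs: a mapping of URL to HTTP status code, and a mapping of each page to the internal links it contains.

```python
def broken_link_report(status_by_url: dict, links_by_page: dict) -> dict:
    """Map each broken URL (status >= 400) to the pages that link to it."""
    broken = {url for url, status in status_by_url.items() if status >= 400}
    report = {}
    for source, targets in links_by_page.items():
        for target in targets:
            if target in broken:
                report.setdefault(target, []).append(source)
    # Sort the sources so the report is stable between runs.
    return {url: sorted(sources) for url, sources in report.items()}


if __name__ == "__main__":
    # Hypothetical crawl output.
    statuses = {"/a": 200, "/b": 404, "/c": 200, "/d": 500}
    links = {"/a": ["/b", "/c"], "/c": ["/b", "/d"]}
    for url, sources in broken_link_report(statuses, links).items():
        print(url, "is linked from", sources)
```

Grouping by broken URL rather than by source page puts the most-linked failures at the top of the fix list once the report is sorted by number of sources.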

Consumer Trust Signals in SEO

In 2026, Consumer Trust Signals have evolved from “best practices” to mandatory ranking factors. Within a system-based architecture, these signals act as the data points that search engines—and AI agents—use to verify your brand’s legitimacy.

The System-Based Trust Framework

Instead of manual checklists, treat trust as a Performance KPI integrated into your technical stack:

- Identity & Security (The Baseline): Beyond HTTPS, search engines now prioritize Organization Schema and verified Knowledge Graph entities. If an algorithm cannot programmatically verify who you are, it won’t rank you.

- Social Proof Aggregation: Reviews and endorsements are no longer just “nice to have.” They are structured data points. Automating the collection and display of AggregateRating schema directly impacts CTR and visibility in AI-generated overviews.

- UX as Trust: High bounce rates and “pogo-sticking” are signals of low trust. Aligning site architecture with user intent ensures the “Experience” part of E-E-A-T is technically validated.
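
As an illustration of the structured-data points above, this sketch builds Organization markup with an AggregateRating block from a list of review scores. The property names come from schema.org; the function name, the 1 to 5 scale, and the sample values are assumptions. Whether a given search engine displays ratings from this markup depends on that engine's own policies.

```python
import json


def organization_jsonld(name: str, url: str, ratings: list) -> str:
    """Build Organization JSON-LD, adding AggregateRating when reviews exist."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
    }
    if ratings:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": round(sum(ratings) / len(ratings), 1),
            "reviewCount": len(ratings),
            "bestRating": 5,   # assumes a 1-5 review scale
            "worstRating": 1,
        }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical organization and review scores.
    print(organization_jsonld("Example Co", "https://example.com", [5, 4, 4, 5]))
```

Omitting the rating block when there are no reviews avoids publishing an empty or zero-valued rating, which would be misleading markup.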

The Academic Bridge: AI Trust & SEO

Linking back to the research on AI-generated content and consumer trust:

- The Trust Gap: Users are increasingly skeptical of purely synthetic content.

- Verification Anchors: Combat this by using automation to insert “Human-in-the-loop” signals—verified author bios, real-world citations, and transparent data sources.

- The Result: High trust signals decrease perceived risk, directly moving the needle on Conversion Rate (CVR) and ROI, not just rankings.

Designing Automated Systems for E-E-A-T

In the 2026 SEO landscape, E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) is no longer a set of manual checks; it is a technical gatekeeper. As AI-generated content saturates search results, search engines and AI answer engines use these signals to differentiate between “synthetic noise” and “trusted authority.”

The Automation Framework for E-E-A-T

Instead of manual audits, high-performance systems use n8n workflows and API integrations to programmatically verify and boost trust signals.

- Experience & Expertise (Verification Nodes):

    - Automated Schema: Use tools like AIOSEO or custom JSON-LD scripts to automatically inject “Person” and “Organization” schema that links authors to verified external profiles (LinkedIn, ResearchGate).

    - Author Credential Scrapers: Deploy workflows to cross-reference author bylines against industry databases, ensuring every piece of content is backed by a recognized expert.

- Authoritativeness (Entity Mapping):

    - Automated Content Audits: Use n8n with RapidAPI or MarketMuse to scan for “topical gaps.” Systems can automatically flag outdated statistics or research, triggering an update to maintain current authority.

    - Citation Monitoring: Set up real-time alerts (via Brand24 or Mention) to track when your brand is cited as a source. If a mention lacks a backlink, automated outreach can secure that authority signal.

- Trustworthiness (Reputation Governance):

    - Review Aggregation: Use ReviewTrackers or TrueReview to consolidate feedback across Google, Yelp, and Trustpilot. Automation can flag negative sentiment for immediate response, proving active engagement.

    - Security & Compliance: Schedule automated scripts to check for HTTPS validity and presence of essential trust pages (Privacy Policy, Contact, About Us) across all subdomains.
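
A custom JSON-LD script for the author markup mentioned above might look like the following sketch. The schema.org properties (`Person`, `jobTitle`, `sameAs`, `worksFor`) are real; the function names, the sample author, and the profile URL are hypothetical, and this is not how any particular plugin generates its output.

```python
import json


def person_jsonld(name: str, job_title: str, profile_urls: list, employer: str = None) -> str:
    """Build Person JSON-LD that links an author to external profiles."""
    data = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        # De-duplicate and sort so the output is stable between runs.
        "sameAs": sorted(set(profile_urls)),
    }
    if employer:
        data["worksFor"] = {"@type": "Organization", "name": employer}
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in a page."""
    # Escape "</" so a string value cannot close the script element early.
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


if __name__ == "__main__":
    # Hypothetical author record.
    markup = person_jsonld(
        "Jane Doe",
        "Head of SEO",
        ["https://www.linkedin.com/in/janedoe"],
        employer="Example Co",
    )
    print(script_tag(markup))
```

Generating the markup from a single author record means a change to a profile URL propagates to every article on the next build, rather than being edited page by page.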

Performance Governance: The ROI of Trust

From a senior director’s perspective, E-E-A-T is a risk mitigation and conversion strategy.

- Lower Churn: High trust signals decrease bounce rates and increase customer loyalty.

- AI Overviews (formerly SGE) Inclusion: AI engines are 40% more likely to cite sources with strong, verifiable E-E-A-T markers.

- KPI Shift: Move beyond “rankings” to “branded search volume” and “mention sentiment.”

Future Trends in SEO for 2026 and Beyond

The SEO landscape in 2026 has moved past traditional keyword-centric tactics, favoring a system-based architecture driven by AI and machine learning. To maintain a competitive edge, focus on these three core transformations:

1. AI-Driven Intent & Personalization

Search engines now prioritize context and intent over simple keyword matching.

- Machine Learning: Real-time algorithmic adjustments mean static content is no longer enough.

- Predictive Optimization: AI tools now forecast user needs, requiring content that solves specific problems rather than just targeting high-volume terms.

2. The Rise of Multimodal Search

Search is no longer just text-based. It is conversational and visual.

- Voice Search: With over 8 billion voice assistants in use, content must be structured for long-tail, natural language questions.

- Visual Search: AI-powered computer vision allows users to search via images, making high-quality, schema-rich visual assets mandatory.

3. Generative Engine Optimization (GEO)

As AI assistants (like Gemini and Perplexity) provide direct answers, visibility now depends on being a core knowledge source.

- Zero-Click Visibility: Optimize for featured snippets (“Position Zero”) to improve the odds that AI agents cite your brand.

- Brand Perception: Success is measured by how accurately AI systems associate your brand with its core expertise.

Conclusion: Embracing System-Based SEO

To stay competitive in 2026, marketers must pivot from isolated keyword tactics to a system-based SEO architecture that integrates technical performance, predictive data analysis, and user experience into a single automated engine. This holistic approach replaces siloed tasks with interconnected pillars: adaptive scalability lets the system evolve alongside shifting search algorithms, and data-driven prediction moves the work from reactive fixes to proactive growth. By blending high-level strategic thinking with automated workflows, brands can move the needle on key ROI metrics and ensure that every technical adjustment reinforces the consumer trust signals, such as expertise and reliability, that are essential for long-term website performance and engagement in an AI-driven landscape.